This is the blunt version of the server RAM market. I looked at the live ServerDimm catalog, cross-checked recent market data, and mapped where demand is really landing across DDR4, DDR5, ECC RDIMM, LRDIMM, and the early MRDIMM tier.

Demand is lopsided. Reuters reported in January 2026 that prices in some memory segments had more than doubled since February 2025 as AI infrastructure soaked up supply, and TrendForce said on March 31, 2026 that DRAM suppliers were reallocating capacity toward server applications, with conventional DRAM contract prices expected to jump 58% to 63% quarter over quarter in 2Q26. Does that sound like a sleepy commodity market to you?

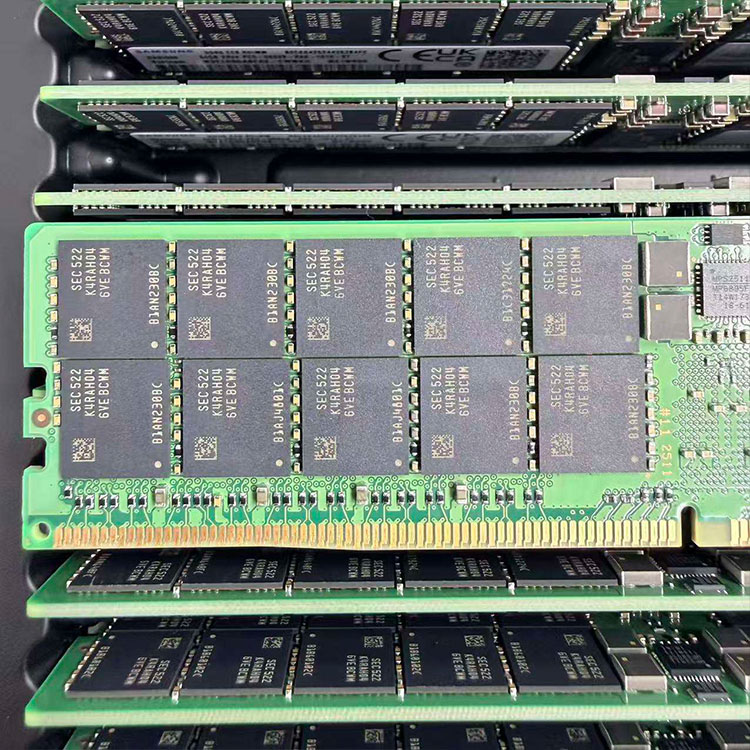

I checked the live ServerDimm structure before writing this. The site’s current DDR4 server memory catalog is loaded with 16GB, 32GB, and 64GB modules across Micron, Samsung, Kingston, and SK hynix, while the DDR5 server memory catalog jumps fast into denser parts such as Micron 64GB DDR5-5600 2Rx4, Micron 96GB DDR5-5600 2Rx4, and SK hynix 128GB DDR5-4800 2S2RX4. Sellers do not merchandise like that by accident; they do it because buyers keep asking for those shapes.

Here is my blunt answer. The real volume demand is split between 32GB and 64GB DDR4 ECC RDIMMs for installed-base support, spare pools, and budget-sensitive expansions, and 64GB, 96GB, and 128GB DDR5 ECC RDIMMs for new servers, denser virtualization hosts, AI-adjacent compute, and consolidation projects where memory-per-socket matters more than entry price. Why are so many buyers still wasting time pretending 16GB modules define the modern server memory market?

Old fleets linger. I have seen too many procurement teams talk as if every rack already runs shiny next-gen silicon, while their actual estate is still full of HPE Gen10, Dell PowerEdge, Lenovo ThinkSystem, and Supermicro boxes that want stable DDR4 ECC server RAM in boring, repeatable capacities. Who keeps those boxes running when finance says “not this quarter”?

That is why the site’s tested used server memory guide matters. ServerDimm’s own buying logic separates new inventory from used stock and treats DDR4 server memory as a distinct maintenance and replenishment problem, which matches how serious buyers actually behave: they chase exact-fit 32GB and 64GB ECC RDIMMs long after vendor marketing has moved on. Isn’t continuity usually worth more than a prettier box?

New platforms are skipping the kiddie pool. On the live catalog, ServerDimm’s DDR5 assortment leans toward 32GB, 64GB, 96GB, and 128GB parts, and Micron’s official data center memory page now treats 96GB and 128GB high-capacity RDIMMs as normal scaling options beside CXL and MRDIMM rather than exotic edge cases. Did you really think serious buyers were going to keep building around tiny DIMMs when rack density, power, and socket economics keep tightening?

Intel is saying the quiet part out loud. In its Xeon 6 product brief, Intel says Xeon 6 supports DDR5-6400 and that MRDIMMs can deliver more than 37% more memory bandwidth than RDIMMs at up to 8,800 MT/s, which tells me the center of gravity is moving upward: 64GB DDR5 RDIMM is the sensible starting point, 96GB is the clever density move, and 128GB is no longer a vanity buy for only the wildest racks. Who wants to explain to operations later that they saved a little money by under-sizing the only component every VM and data pipeline touches?
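The 37% figure is easy to sanity-check with back-of-envelope arithmetic. The sketch below is my own illustration, not Intel's methodology: it assumes a 64-bit data path per channel (8 bytes per transfer, ECC bits ignored) and compares theoretical peaks only. The function names are made up for this example.

```python
# Back-of-envelope peak bandwidth per memory channel.
# Assumption: 8 bytes (64 data bits) move per transfer; ECC bits are ignored.
BYTES_PER_TRANSFER = 8


def peak_bandwidth_gbs(mt_per_s: int) -> float:
    """Theoretical peak for one channel, in decimal GB/s."""
    return mt_per_s * BYTES_PER_TRANSFER / 1000


def uplift_pct(base_mt_per_s: int, fast_mt_per_s: int) -> float:
    """Percentage gain in transfer rate going from base to fast."""
    return (fast_mt_per_s / base_mt_per_s - 1) * 100


print(f"DDR5-6400 RDIMM: {peak_bandwidth_gbs(6400):.1f} GB/s per channel")
print(f"MRDIMM at 8800:  {peak_bandwidth_gbs(8800):.1f} GB/s per channel")
print(f"Uplift: {uplift_pct(6400, 8800):.1f}%")
```

The transfer-rate ratio alone works out to 37.5%, which squares with Intel's "more than 37%" wording. Real workloads will land below theoretical peak, so treat this as a ceiling, not a benchmark.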

Capacity planning gets lied about all the time. If you read ServerDimm’s recent virtualization host memory sizing guide and its server memory compatibility checks, the subtext is obvious: buyers are not asking abstract memory questions, they are asking how to hit the right density without wrecking compatibility, power, or failure exposure. Why do so many vendors still write like buyers only care about one speed number and a photo of a heat spreader?

| Demand tier | Capacity and type | Typical speed bins | Who is buying it | Why it keeps moving | My read |
|---|---|---|---|---|---|
| Legacy fleet mainstay | 32GB DDR4 ECC RDIMM | 2666 / 2933 / 3200 MT/s | OEM support teams, spare-pool planners, staged refresh buyers | Cheap to standardize, easy to match, wide installed base | Still one of the safest volume plays |
| Legacy density workhorse | 64GB DDR4 ECC RDIMM | 2666 / 2933 / 3200 MT/s | Virtualization refreshes, memory-heavy older platforms | Better consolidation without forcing a platform migration | Quietly huge demand |
| New-platform default | 64GB DDR5 ECC RDIMM | 4800 / 5600 / 6400 MT/s | Enterprise rollouts, cloud nodes, general compute | Strong memory-per-core balance without immediate premium shock | The practical winner in fresh builds |
| Fastest-growing density tier | 96GB DDR5 ECC RDIMM | 5600 MT/s | AI-adjacent compute, analytics, denser virtualization | Better density and channel economics than overpopulating with smaller DIMMs | Smarter than many buyers realize |
| Premium density tier | 128GB DDR5 ECC RDIMM or LRDIMM | 4800 / 6400 MT/s | High-density hosts, in-memory databases, analytics clusters | Fewer DIMMs, less slot pressure, bigger per-socket footprint | Strong demand, but budgets feel it |
| Emerging bandwidth tier | 128GB / 256GB MRDIMM | up to 8800 MT/s | Intel Xeon 6 AI and HPC projects | Bandwidth, not just capacity | Real, but not yet mass-volume |

That table is my synthesis, but it is not guesswork. It lines up with the live ServerDimm category mix, Micron’s current emphasis on 96GB and 128GB RDIMMs, Intel’s Xeon 6 memory roadmap, and vendor guidance that keeps RDIMM at the center while pushing higher-density or higher-bandwidth modules into more specialized jobs.

Three words now. RDIMM still rules. Kingston flatly says RDIMM, LRDIMM, and MRDIMM modules cannot be mixed in the same system, and neither can DDR4 and DDR5, while Samsung frames RDIMM as the dependable enterprise default because it buffers signals, improves stability, and supports higher capacities than unbuffered memory. Why do I keep seeing buyers talk about RDIMM versus LRDIMM as if one is universally “better” instead of workload-specific?

My bias is simple. For mainstream server RAM, RDIMM is where most demand belongs because it hits the best balance of cost, availability, platform support, and density, which is exactly why the live ServerDimm assortment keeps surfacing DDR4 and DDR5 RDIMM-style parts in 32GB, 64GB, 96GB, and 128GB shapes instead of turning the whole catalog into weird specialist modules. Isn’t the boring answer usually the profitable one?

I hate fake equivalence. Samsung says LRDIMM reduces electrical load and is built for large memory requirements, while Kingston says LRDIMM is best in high-capacity enterprise servers, virtualized hosts, and data centers where maximum density matters; Intel, meanwhile, is pushing MRDIMM for bandwidth-starved Xeon 6 AI and HPC systems. So what is the real split? LRDIMM is the density tax you pay when the board, the workload, and the memory map all scream for more capacity, while MRDIMM is the speed tool you reach for when bandwidth becomes the bottleneck.

That is why I would not call LRDIMM the volume leader. I would call it the high-need, lower-volume part of the market, the thing you buy because slot pressure or per-socket memory ceilings leave you no sane alternative, not because somebody in procurement wanted to sound advanced in a meeting. Have you ever seen a clean LRDIMM deal that started with marketing language instead of a memory map?

Failure is pricey. Uptime Institute’s 2024 survey says 54% of significant outages cost more than $100,000 and 20% topped $1 million, while Google’s fleet study on DRAM errors in the wild found more than 8% of DIMMs were affected by errors per year. Still think server memory is the place to get casual?
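To make that 8% figure concrete, here is a rough sketch that scales it to a fleet. It assumes the Google per-DIMM annual rate transfers to your hardware, which is a big assumption, and the fleet size and DIMM count are invented for illustration.

```python
def expected_affected_dimms(servers: int, dimms_per_server: int,
                            annual_rate: float = 0.08) -> float:
    """Expected number of DIMMs seeing at least one error in a year.

    annual_rate defaults to the 8% floor cited from the Google study;
    applying it to any other fleet is an assumption, not a measurement.
    """
    return servers * dimms_per_server * annual_rate


# Hypothetical fleet: 200 servers with 16 DIMMs each.
estimate = expected_affected_dimms(servers=200, dimms_per_server=16)
print(f"Roughly {round(estimate)} DIMMs per year could show errors")
```

The number is a planning floor for spare pools and screening budgets, not a forecast. Your own error telemetry beats any borrowed rate.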

The Alibaba and CUHK field study is even uglier. Their paper on DRAM errors and server failures in production data centers analyzed 250,000 servers, more than 3 million DIMMs, 75.1 million correctable errors, and 2,137 DRAM-driven server failures, and found that more than 40% of those failures showed correctable errors within one hour of the event. If that does not make you care about validation, matching, and screening, what exactly will?

That is why the internal linking strategy here should be practical, not decorative. When this article mentions server memory compatibility checks, quality testing and warranty workflow, virtualization host memory sizing, DDR4 server memory, DDR5 server memory, and the tested used server memory guide, it is doing the job of an honest B2B site: moving the reader from demand questions to compatibility, then to sourcing, then to validation, then to quote intent. Why send a buyer straight to a product grid before answering the question that can kill the install?

The server memory capacities drawing the most real-world demand in 2026 are 32GB and 64GB DDR4 ECC RDIMMs for installed-base maintenance, plus 64GB, 96GB, and 128GB DDR5 ECC RDIMMs for new builds, virtualization hosts, AI-adjacent compute, and higher-density consolidation projects. I would treat that as the modern demand stack unless a buyer is chasing a very specific edge case.

DDR4 server memory is still worth buying when the job is maintenance, spare-pool coverage, or staged expansion on existing platforms, because exact-fit 32GB and 64GB ECC RDIMMs usually beat forced platform replacement on cost, lead time, and operational risk for older enterprise fleets. I would not confuse “older” with “irrelevant”; the live catalog mix says the market does not confuse those two things either.

RDIMM is the default enterprise server memory for balanced cost, stability, and availability, while LRDIMM is the better fit when your server platform supports it and your workload needs larger total memory footprints, denser socket population, or fewer compromises in memory-heavy virtualization, analytics, and database nodes. The mistake is treating them as interchangeable; Kingston is explicit that they cannot be mixed and many systems will not boot if you try.
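The no-mixing rule is simple enough to check before a quote goes out. Below is a minimal sketch of such a pre-check, assuming you already know each module's generation and type. The `Dimm` class and `mixing_problems` function are hypothetical names for this example, and the check does not replace the platform's own qualified-memory list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dimm:
    generation: str   # "DDR4" or "DDR5"
    module_type: str  # "RDIMM", "LRDIMM", or "MRDIMM"
    capacity_gb: int


def mixing_problems(dimms: list[Dimm]) -> list[str]:
    """Return human-readable reasons this set should not share a system."""
    problems = []
    generations = sorted({d.generation for d in dimms})
    module_types = sorted({d.module_type for d in dimms})
    if len(generations) > 1:
        problems.append("mixed generations: " + ", ".join(generations))
    if len(module_types) > 1:
        problems.append("mixed module types: " + ", ".join(module_types))
    return problems


# Hypothetical order: one LRDIMM slipped into an RDIMM build.
order = [Dimm("DDR5", "RDIMM", 64), Dimm("DDR5", "LRDIMM", 128)]
print(mixing_problems(order))
```

An empty list only means the set passes this one rule. Rank, speed bin, and vendor qualification still need their own checks.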

128GB DDR5 RDIMM makes sense when CPU core counts, VM density, AI-adjacent preprocessing, in-memory analytics, or licensing economics reward more memory per socket, because higher-density modules can cut slot pressure, simplify channel planning, and reduce the ugly trade-off between consolidation goals and available motherboard real estate. I would look at 128GB first when 64GB feels merely adequate and 96GB still leaves the host cramped.
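Here is how I would sketch that 64GB versus 96GB versus 128GB decision in code. The assumptions are mine: one DIMM per channel, every channel populated, and a hypothetical socket with 8 memory channels. Check your platform's actual channel count and population rules before trusting any of it.

```python
def capacity_per_socket_gb(module_gb: int, channels: int,
                           dimms_per_channel: int = 1) -> int:
    """Installed capacity when every channel gets the same module."""
    return module_gb * channels * dimms_per_channel


def smallest_module_for(target_gb: int, channels: int,
                        module_sizes=(64, 96, 128)):
    """Smallest module size that reaches target_gb at one DIMM per channel.

    Returns None when even the largest size falls short.
    """
    for size in sorted(module_sizes):
        if capacity_per_socket_gb(size, channels) >= target_gb:
            return size
    return None


CHANNELS = 8  # assumption: illustrative socket, not a specific CPU
for size in (64, 96, 128):
    print(f"{size}GB x {CHANNELS} channels = "
          f"{capacity_per_socket_gb(size, CHANNELS)}GB per socket")
print("Target 900GB ->", smallest_module_for(900, CHANNELS), "GB modules")
```

On that hypothetical 8-channel socket, 64GB modules top out at 512GB, 96GB at 768GB, and 128GB at 1,024GB, so a 900GB target only clears with 128GB parts unless you go to two DIMMs per channel.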

MRDIMM is not mainstream volume memory yet; it is an emerging DDR5 server memory type aimed at bandwidth-constrained AI and HPC systems, especially on Intel Xeon 6 platforms, where higher transfer rates matter more than the procurement habits of ordinary enterprise refresh cycles. I take it seriously, but I would not pretend it has already replaced RDIMM as the everyday enterprise default.

Start brutally. Audit the fleet you actually have, separate legacy maintenance from new-platform rollout, and stop pretending one server RAM strategy covers both. Then route the buyer journey the right way: first read the server memory compatibility checks, then compare the live DDR4 server memory catalog and DDR5 server memory catalog, then use the virtualization host memory sizing guide or the tested used server memory guide to narrow the commercial path, and only after that lean on the quality testing and warranty workflow and contact page to move into quote mode. That is how I would build this funnel, and honestly, it is how more hardware sites should have been built years ago.
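The audit step can start as a few lines of scripting. This is a minimal sketch, assuming you can export one row per host with its memory generation, module size, and DIMM count. The field names and sample hosts are hypothetical.

```python
from collections import Counter

# Hypothetical inventory rows; in practice these would come from
# whatever asset or management export you already have.
FLEET = [
    {"host": "esx-01", "memory_gen": "DDR4", "module_gb": 32, "dimm_count": 12},
    {"host": "esx-02", "memory_gen": "DDR4", "module_gb": 32, "dimm_count": 12},
    {"host": "db-01", "memory_gen": "DDR4", "module_gb": 64, "dimm_count": 16},
    {"host": "ai-01", "memory_gen": "DDR5", "module_gb": 96, "dimm_count": 8},
]


def audit(fleet):
    """Group installed DIMMs by generation, then by module size.

    Returns {generation: Counter({module_gb: total_dimms})}.
    """
    result = {}
    for server in fleet:
        by_size = result.setdefault(server["memory_gen"], Counter())
        by_size[server["module_gb"]] += server["dimm_count"]
    return result


for generation, sizes in sorted(audit(FLEET).items()):
    for module_gb, total in sorted(sizes.items()):
        print(f"{generation} {module_gb}GB: {total} DIMMs installed")
```

The DDR4 rows are your maintenance and spare-pool problem. The DDR5 rows are your rollout problem. Keeping them in separate buckets from the first spreadsheet is the whole point.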
